What ChatGPT is good and bad at

I ❤️ Large Language Models (LLMs): I’m in the camp of “ChatGPT is the first AGI”. This doesn’t mean there aren’t still significant limitations and pitfalls to avoid. Knowing the strengths and limitations of LLMs is crucial to understanding how this powerful tool can simplify our work.

LLMs operate exclusively on statistical principles, without relying on any internal symbolic representation of knowledge: they have no built-in Knowledge Graph. This makes them great at mimicking humans but not so good at accurate referencing. Recently a lawyer got in trouble for citing 6 non-existent cases hallucinated by ChatGPT.

In which industries is “mimicking humans” the bottleneck? I can’t answer this question fully (and we’ll find out soon enough anyway), but I can talk about one field I’m familiar with.

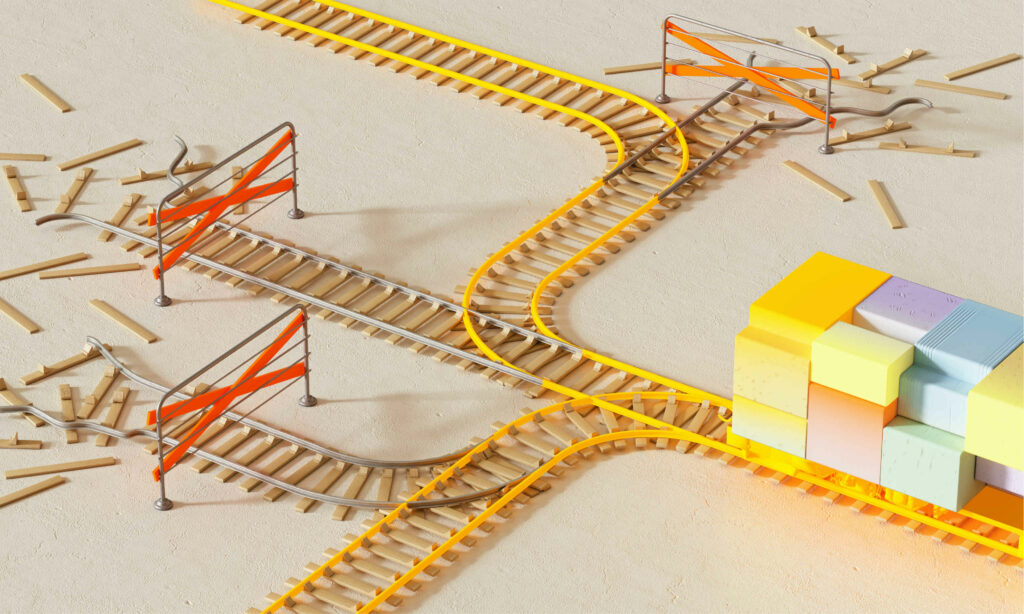

Data Engineering is hard

Data Science is rarely the problem. This is the first thing everyone learns when working with data teams. Most of the effort goes into Data Engineering (DE): acquiring, cleaning, storing and later querying data. To avoid headaches down the road, you want to spend some time designing this process so that it is resilient and scalable. When the amount of data is large, the way you organize storage (the data model) becomes very important. All this takes time and effort.

As someone who strives to write clean code, I find that DE can sometimes feel icky. Processing data with code means handling the numerous special cases and idiosyncrasies of the specific dataset. These are often beyond your control; you just have to deal with them. Think of adapting a Selenium scraping script to small variations in the HTML, or standardizing text in a VARCHAR column to a common format. Code must account for these variations, often through hard-coded rules that make you feel dirty.
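
To make the “hard-coded rules” point concrete, here is a minimal Python sketch of the kind of cleaning function that piles up in DE codebases. The column contents and the list of formats are hypothetical. Note the assumption that slash-separated dates are day-first: that is exactly the sort of dataset-specific decision that ends up baked into code.

```python
from datetime import datetime
from typing import Optional

# Hypothetical raw values from a VARCHAR column that holds dates in mixed formats.
RAW_DATES = ["2023-05-01", "01/05/2023", "May 1, 2023", " 2023.05.01 ", "n/a"]

# Formats encountered so far. Assumption: slash dates are day-first (DD/MM/YYYY).
# Every new quirk in the source means another hard-coded entry here.
KNOWN_FORMATS = ["%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y", "%Y.%m.%d"]

# Placeholder strings that should become NULL rather than a date.
NULL_MARKERS = {"", "n/a", "null", "-"}


def standardize_date(value: str) -> Optional[str]:
    """Return the value as an ISO-8601 date string, or None if it can't be parsed."""
    cleaned = value.strip()
    if cleaned.lower() in NULL_MARKERS:
        return None
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date().isoformat()
        except ValueError:
            continue  # not this format, try the next one
    return None  # unknown format: in practice, log it and add yet another rule


print([standardize_date(v) for v in RAW_DATES])
```

Running it prints `['2023-05-01', '2023-05-01', '2023-05-01', '2023-05-01', None]`. The logic is trivial; the pain is that `KNOWN_FORMATS` and `NULL_MARKERS` only ever grow.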

It is no wonder, then, that the DE industry is thriving: where there is a problem, there is a business opportunity. Lots of low-code solutions (Prophecy on Databricks, Meltano and Dataiku’s DSS, to name a few off the top of my head) are emerging, trying to simplify and automate DE tasks.

How LLMs will help with DE

I can see LLMs being used in a variety of ways for the boring parts of DE. Here are some ideas:

Data catalog. Given a bunch of disparate data sources and a business context, LLMs could generate structured documentation on what is found where and in which format. Just as a human would, the LLM could use sensible column names and comments to make reasonable assumptions.
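
A rough sketch of the input side of such a catalog generator, using SQLite so it runs anywhere: collect each table’s column names, types and a few sample rows, and wrap them in a prompt with the business context. The table, the prompt wording and the idea of sending the result to an LLM are all assumptions for illustration; SQLite has no column comments, so sample rows stand in for them here.

```python
import sqlite3


def describe_table(conn: sqlite3.Connection, table: str, n_samples: int = 3) -> str:
    """Render one table's columns, types and a few sample rows as plain text."""
    # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) per column.
    columns = conn.execute(f"PRAGMA table_info('{table}')").fetchall()
    lines = [f"Table: {table}"]
    for _cid, name, col_type, notnull, _default, pk in columns:
        flags = [label for label, on in (("primary key", pk), ("not null", notnull)) if on]
        suffix = f" ({', '.join(flags)})" if flags else ""
        lines.append(f"  - {name}: {col_type or 'untyped'}{suffix}")
    # A few sample rows let the model infer formats (dates, currencies, codes).
    rows = conn.execute(f'SELECT * FROM "{table}" LIMIT ?', (n_samples,)).fetchall()
    lines.append("  Sample rows: " + "; ".join(map(str, rows)))
    return "\n".join(lines)


def build_catalog_prompt(conn: sqlite3.Connection, business_context: str) -> str:
    """Assemble one prompt describing every table, plus the business context."""
    tables = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
    sections = "\n\n".join(describe_table(conn, t) for t in tables)
    return (
        f"Business context: {business_context}\n\n"
        "For each table below, document what it contains, what each column means, "
        "its format, and any assumptions you are making.\n\n" + sections
    )


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE cust (cust_id INTEGER PRIMARY KEY, cust_email TEXT NOT NULL, signup_dt TEXT);
    INSERT INTO cust VALUES (1, 'ana@example.com', '2023-01-15'), (2, 'bo@example.com', '2023-02-03');
""")
prompt = build_catalog_prompt(conn, "Online shop; cust holds registered customers.")
print(prompt)
```

The model never needs the full table: names, types and a handful of rows are usually what a human would look at too, which keeps this use case cheap.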

Business Intelligence metrics. Analysts could describe the metrics and KPIs they need to monitor in plain English and have the LLM generate the SQL for them. Of the ideas discussed here, this is probably the lowest-hanging fruit; I would be surprised if someone were not already offering this service. LlamaIndex’s Text-to-SQL could be used.
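
Rather than guess at a specific library’s API, here is a library-agnostic sketch of the Text-to-SQL pattern: give the model the schema, ask for one query, and refuse to run anything that is not a single SELECT that SQLite can compile. `llm` is assumed to be any function from prompt text to response text; `fake_llm` is a hypothetical stand-in returning what a model might plausibly answer.

```python
import sqlite3
from typing import Callable


def schema_ddl(conn: sqlite3.Connection) -> str:
    """Collect the CREATE TABLE statements the model needs as context."""
    rows = conn.execute("SELECT sql FROM sqlite_master WHERE type = 'table'").fetchall()
    return "\n".join(sql + ";" for (sql,) in rows)


def metric_to_sql(conn: sqlite3.Connection, request: str, llm: Callable[[str], str]) -> str:
    """Ask the model for a query computing `request`, then vet it before use."""
    prompt = (
        "You write SQLite queries. Schema:\n" + schema_ddl(conn)
        + f"\n\nWrite one SELECT statement that computes: {request}\nReturn only SQL."
    )
    sql = llm(prompt).strip().rstrip(";")
    # Guardrail 1: a single, read-only statement (conservative: rejects any ';').
    if not sql.lower().startswith(("select", "with")) or ";" in sql:
        raise ValueError(f"Refusing non-SELECT or multi-statement output: {sql!r}")
    # Guardrail 2: EXPLAIN compiles the query without running it; bad SQL raises.
    conn.execute("EXPLAIN " + sql)
    return sql


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return ("SELECT strftime('%Y-%m', order_dt) AS month, SUM(amount) AS revenue "
            "FROM orders GROUP BY month ORDER BY month;")


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER PRIMARY KEY, order_dt TEXT, amount REAL);
    INSERT INTO orders VALUES (1, '2023-01-05', 20.0), (2, '2023-01-20', 30.0), (3, '2023-02-02', 15.5);
""")
sql = metric_to_sql(conn, "monthly revenue", fake_llm)
print(conn.execute(sql).fetchall())
```

With the stub this prints `[('2023-01', 50.0), ('2023-02', 15.5)]`. The guardrails only catch malformed or unsafe output; whether the query computes the metric the analyst meant still needs a human look.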

Data cleaning and standardization. When migrating data or consolidating data from multiple sources, some simple transformations are often needed to conform all the data to the same format. Given just an example of the desired format, LLMs could generate the appropriate SQL code.
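
A minimal sketch of the “state an example” workflow, again assuming a hypothetical `llm` callable (stubbed as `fake_llm`). The point of the design is that the model only sees a couple of examples, while the SQL expression it returns runs over every row inside the database. Replaying the examples through the expression is a cheap check before the `UPDATE` touches real data.

```python
import sqlite3
from typing import Callable


def cleaning_expression(examples: list[tuple[str, str]], column: str,
                        llm: Callable[[str], str]) -> str:
    """Ask the model for a SQL expression mapping raw values to the target format."""
    shots = "\n".join(f"{raw!r} -> {clean!r}" for raw, clean in examples)
    prompt = (f"Write a single SQLite expression over the column {column} that "
              f"maps values like these:\n{shots}\nReturn only the expression.")
    return llm(prompt).strip()


def reproduces_examples(conn: sqlite3.Connection, expr: str, column: str,
                        examples: list[tuple[str, str]]) -> bool:
    """Replay every example through the expression before trusting it on real data."""
    for raw, expected in examples:
        (got,) = conn.execute(f"SELECT {expr} FROM (SELECT ? AS {column})", (raw,)).fetchone()
        if got != expected:
            return False
    return True


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call."""
    return "UPPER(REPLACE(TRIM(sku), '-', ''))"


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE products (sku TEXT);
    INSERT INTO products VALUES ('ab-123'), (' cd-9 '), ('Ef-77');
""")
examples = [("ab-123", "AB123"), (" cd-9 ", "CD9")]
expr = cleaning_expression(examples, "sku", fake_llm)
if reproduces_examples(conn, expr, "sku", examples):
    # The model saw two examples; the expression now runs over every row in SQL.
    conn.execute(f"UPDATE products SET sku = {expr}")
print(conn.execute("SELECT sku FROM products ORDER BY rowid").fetchall())
```

This prints `[('AB123',), ('CD9',), ('EF77',)]`: the third row was never shown to the model, yet it is cleaned by the same generated rule.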

Data modelling. Given the business requirements and the source data formats, LLMs could be used to generate SQL code for an appropriate data warehouse schema.
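
Generated DDL should not go straight into a warehouse. One cheap sanity check, sketched below under the assumption that the model’s output is plain `CREATE TABLE` statements, is to load it into an empty in-memory SQLite database and verify that the expected tables exist and that every foreign key points at a real table (SQLite accepts dangling `REFERENCES` at creation time, so this has to be checked explicitly). `GENERATED_DDL` is a made-up star schema, and SQLite’s dialect is more permissive than most warehouses’, so this is a smoke test, not a validation of the model.

```python
import sqlite3

# Hypothetical model output for "track order revenue by customer and day".
GENERATED_DDL = """
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, email TEXT, country TEXT);
CREATE TABLE dim_date (date_key INTEGER PRIMARY KEY, iso_date TEXT, month TEXT);
CREATE TABLE fact_orders (
    order_id INTEGER PRIMARY KEY,
    customer_key INTEGER REFERENCES dim_customer(customer_key),
    date_key INTEGER REFERENCES dim_date(date_key),
    amount REAL
);
"""


def check_schema(ddl: str, required: set[str]) -> list[str]:
    """Load DDL into an empty in-memory DB and report structural problems."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(ddl)  # raises sqlite3.Error on syntax errors
    tables = {row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table'")}
    problems = [f"missing table {t}" for t in sorted(required - tables)]
    for table in sorted(tables):
        # Each row: (id, seq, table, from, to, on_update, on_delete, match).
        for fk in conn.execute(f"PRAGMA foreign_key_list('{table}')"):
            if fk[2] not in tables:
                problems.append(f"{table}.{fk[3]} references unknown table {fk[2]}")
    return problems


print(check_schema(GENERATED_DDL, {"fact_orders", "dim_customer", "dim_date"}))
```

An empty list means the schema at least hangs together; whether it matches the business requirements is still a review task.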

The next two use cases are harder because, in their trivial implementation, they would require the LLM to process all the incoming data. This would get very expensive fast.

Data deduplication. Often one has to deal with duplicated data in a database. Duplicates are not always trivial to remove: some rows are “the same” for a human but not for SQL (e.g. text that uses spaces instead of tabs). LLMs could easily catch these instances and mark them as duplicates.
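
Since sending every row, let alone every pair, to an LLM would be expensive, a plausible design is a cheap pre-filter: normalize whitespace and case, treat exact matches as duplicates for free, and only ask the model about pairs that look similar but not identical. The sketch below uses Python’s `difflib` similarity ratio as that filter; the 0.75 threshold is an arbitrary assumption, and `fake_judge` is a hypothetical stand-in for an LLM asked “do these two records refer to the same entity?”.

```python
import difflib
import re
from itertools import combinations
from typing import Callable


def normalize(text: str) -> str:
    """Collapse runs of whitespace (spaces, tabs, newlines) and ignore case."""
    return re.sub(r"\s+", " ", text).strip().casefold()


def find_duplicates(rows: list[str], same_entity: Callable[[str, str], bool],
                    threshold: float = 0.75) -> list[tuple[int, int]]:
    """Return index pairs judged to be duplicates.

    Exact matches after normalization are free; only "similar but not equal"
    pairs are passed to `same_entity`, which would wrap the expensive LLM call.
    Comparing all pairs is O(n^2): fine for a toy, not for a real table.
    """
    pairs = []
    for i, j in combinations(range(len(rows)), 2):
        a, b = normalize(rows[i]), normalize(rows[j])
        if a == b:
            pairs.append((i, j))
        elif (difflib.SequenceMatcher(None, a, b).ratio() >= threshold
              and same_entity(rows[i], rows[j])):
            pairs.append((i, j))
    return pairs


def fake_judge(a: str, b: str) -> bool:
    """Hypothetical stand-in for an LLM judgement: compares first words only."""
    return normalize(a).split()[0] == normalize(b).split()[0]


rows = ["Acme Corp\tMilan", "acme corp  milan", "Acme Corporation, Milan", "Globex Inc, Springfield"]
print(find_duplicates(rows, fake_judge))
```

The tab-versus-spaces pair is caught without any model call; “Acme Corporation, Milan” is the kind of case where the LLM judgement earns its cost.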

Anomaly detection. ML is already being used in DE by companies like Anomalo to check for anomalous changes in data volume, freshness, null values or key metrics. This can be an invaluable tool for monitoring real-time pipelines, such as those used in the fintech industry. LLMs could add another layer to these tools by looking at the actual meaning of the data. Of course, this would be particularly effective on text data.
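
For contrast, the volume checks mentioned above don’t need an LLM at all. The sketch below flags days whose row count sits far from the trailing week using a rolling z-score. This is a generic statistical check, not Anomalo’s actual method, and the window size and threshold are arbitrary assumptions. An LLM layer would sit on top of something like this, looking at what the flagged rows actually say.

```python
import statistics


def volume_anomalies(daily_counts: dict[str, int], window: int = 7,
                     z_threshold: float = 3.0) -> list[str]:
    """Flag days whose row count deviates strongly from the trailing `window` days."""
    days = sorted(daily_counts)  # ISO dates sort chronologically as strings
    flagged = []
    for i in range(window, len(days)):
        history = [daily_counts[d] for d in days[i - window:i]]
        mean = statistics.mean(history)
        stdev = statistics.stdev(history)  # sample standard deviation
        value = daily_counts[days[i]]
        if stdev == 0:
            is_anomaly = value != mean  # flat history: any change is suspicious
        else:
            is_anomaly = abs(value - mean) / stdev >= z_threshold
        if is_anomaly:
            flagged.append(days[i])
    return flagged


counts = [1000, 1020, 980, 1010, 995, 1005, 990, 1015, 120, 1000]
daily = {f"2023-06-{day:02d}": n for day, n in enumerate(counts, start=1)}
print(volume_anomalies(daily))
```

This flags only `2023-06-09`, the day the volume collapsed. One design caveat: the dip stays in the trailing window for the next week and inflates the standard deviation, so a production check would typically exclude already-flagged days from the baseline.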

Conclusion

DE is hard and often requires ad-hoc code changes to deal with the idiosyncrasies of the specific dataset. LLMs could help a great deal in assisting with, and eventually automating, these tasks. I think it’s very reasonable to expect that LLMs will soon be used in areas such as data catalogs, BI metric development and data cleaning, as well as in more advanced use cases such as anomaly detection. The big players to watch are those with established low-code DE solutions, especially those already intimate with ML, such as Anomalo.